Start With a Monolith. Split It Only When You Have To

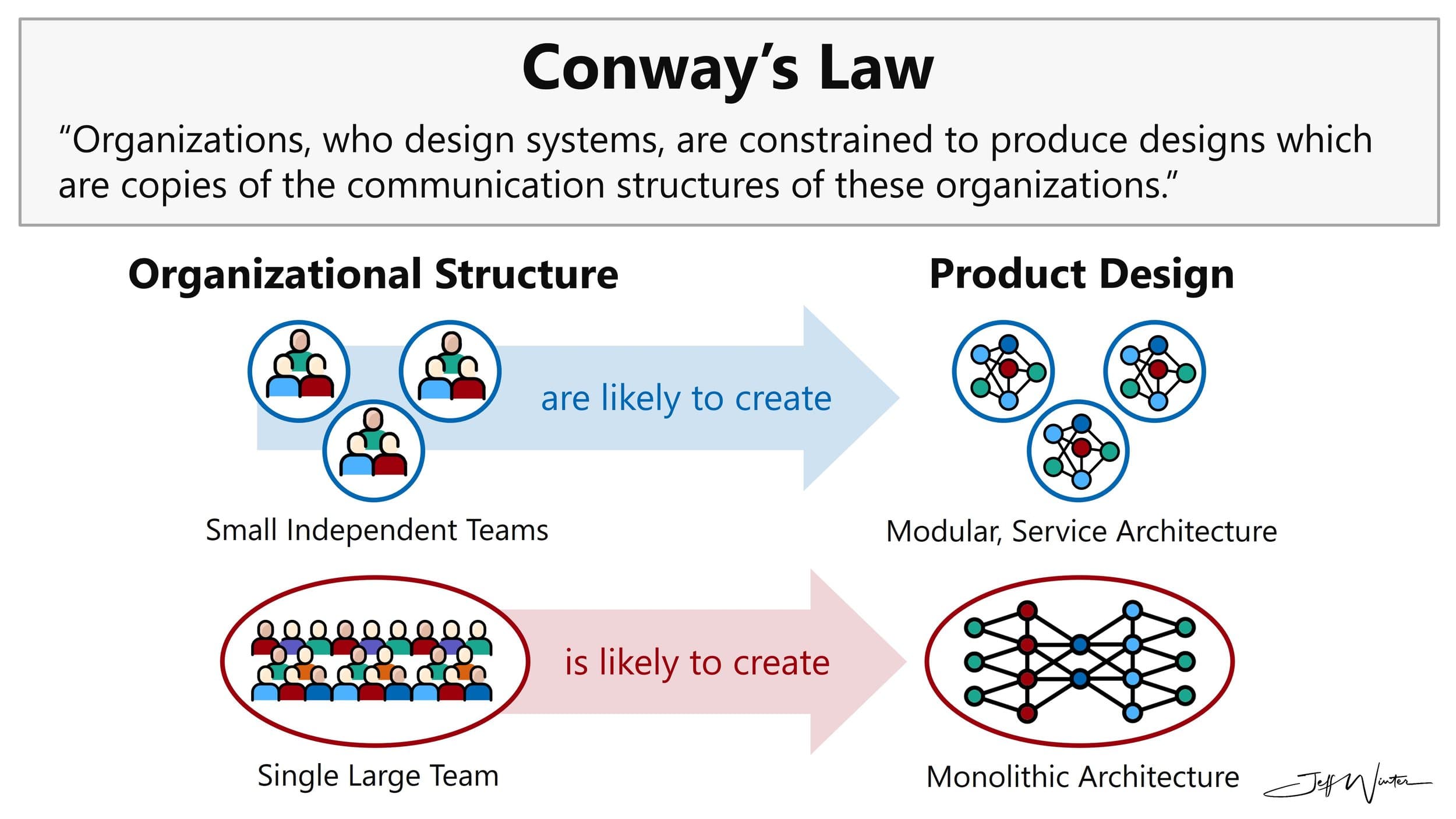

Conway's Law, the microservices premium, and why team structure should shape architecture — not the other way around.

Conway's Law says systems end up mirroring the communication structure of the teams that build them. A small team produces a unified system; a distributed organization produces distributed software. When I see a five-person team running 60 microservices, I see a team fighting that law — and losing.

That observation shapes every architectural decision I make on a new project. The shape of my system should follow the shape of my team, not the other way around.

In my experience, most teams over-engineer on day one and pay for it for years. I've worked on projects that turned into maintenance nightmares. Some were eventually rescued, but only after burning serious time and money, and the technical debt followed them long after. It doesn't have to go that way.

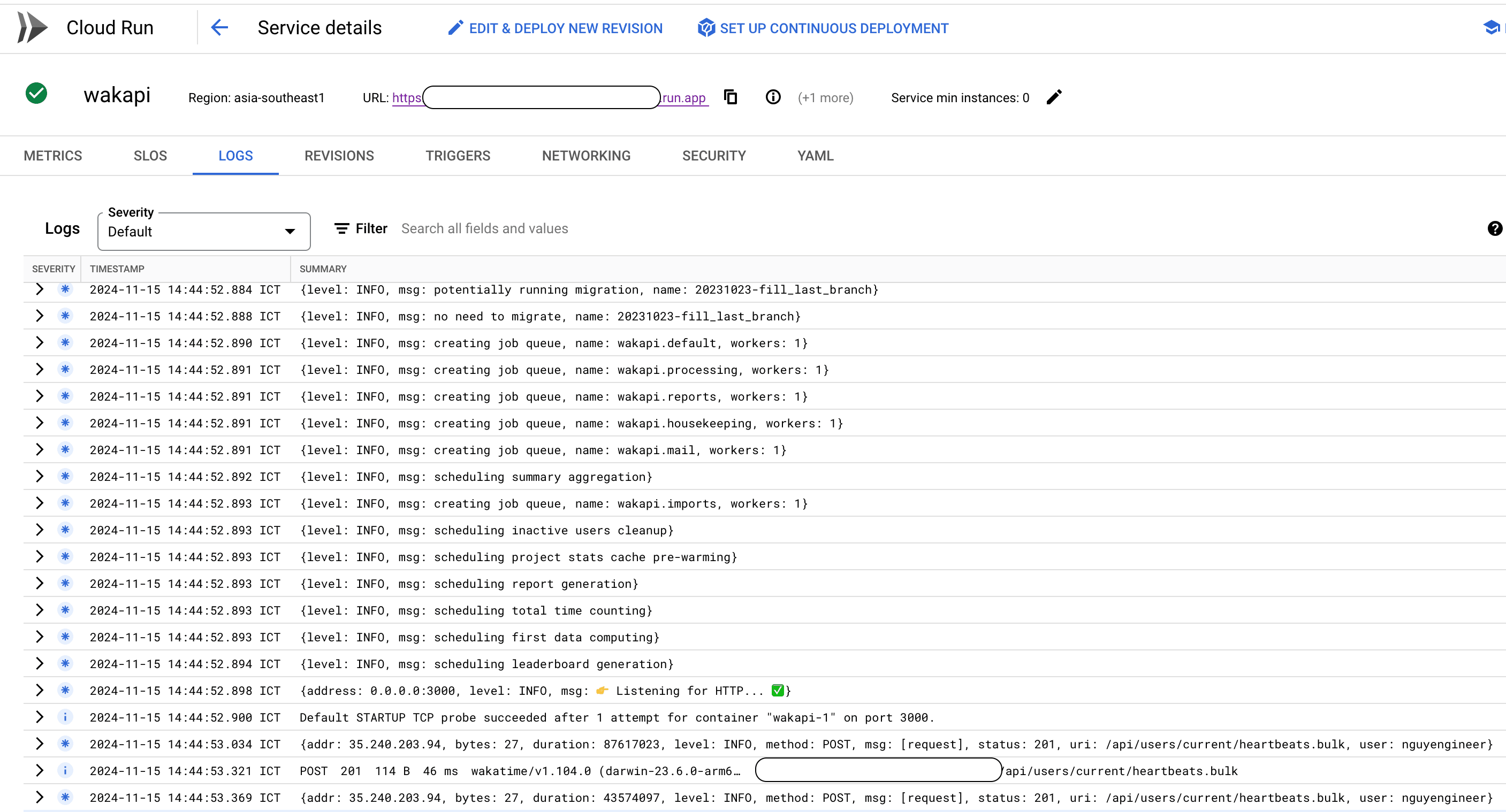

When I'm building something new with a small team, I focus on functionality, not scale. A vertically scaled, stateless server behind a good proxy like Traefik gets me much further than I once thought possible. Here's the architecture I reach for today.

My Majestic Monolith

Five principles I treat as non-negotiable:

1. Clean architecture from day one. I keep my business logic independent of my infrastructure. It's just separation of concerns, but the payoff compounds. When I eventually need to extract a service — when team structure or workload shape demands it — only the infrastructure layer changes. The core logic runs the same whether it lives in a VM, a Kubernetes pod, a Lambda, or a Cloud Function.

2. One capable server. I pick a runtime that can fully saturate the hardware I'm paying for and run uninterrupted for months. For a long-lived monolith, I look for efficient use of multiple cores under sustained load, predictable memory behavior over weeks of uptime, and a mature concurrency model. Different languages meet that bar in different ways — I choose one my team can operate confidently for years, not just ship the first feature with.

3. A managed cloud database. Postgres or MongoDB covers almost every use case I've encountered. I let the cloud provider handle scaling, and I sidestep the "shared database vs. database-per-service" debate entirely, because I don't have services yet.

4. A stateless server. Single-server doesn't mean single-instance. When a hot spot emerges — say, the user domain — I spin up more instances of the same codebase, point the load balancer at them, and route that domain's traffic to those instances. I've effectively got a dedicated service without rewriting anything. Token-based auth means any request can land on any instance, so I skip sticky sessions entirely.

5. A push-based message bus. Async work doesn't require a fleet of pods polling a queue. I keep the business logic inside the core, expose it as an API, and configure pub/sub to push messages to that endpoint. Long-running handlers process the work and acknowledge the message.

This gives me ordered delivery where the broker supports it, natural backpressure (the broker slows delivery when my service responds slowly or returns errors), and minimal operational overhead. If a workload is genuinely heavy, I run it on a separate instance of the same monolith dedicated to async tasks.
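To make the push model concrete, here is a minimal sketch of the receiving side. It assumes an envelope shaped like a Google Cloud Pub/Sub push delivery (a JSON body whose `message` carries a `messageId` and a base64-encoded `data` field); other brokers use different shapes. `send_welcome_email`, `PushHandler`, and `make_envelope` are hypothetical names, and the in-memory set of seen IDs stands in for a shared store, since a stateless instance can't rely on local state.

```python
import base64
import json


def send_welcome_email(user_id: str, sent_log: list) -> None:
    """Hypothetical core logic: no knowledge of HTTP or the broker."""
    sent_log.append(user_id)


class PushHandler:
    """Thin adapter between a push subscription and the core logic."""

    def __init__(self) -> None:
        # Sketch only: with several stateless instances this set would live
        # in the shared database, not in process memory.
        self.seen_ids = set()
        self.sent_log = []

    def handle(self, envelope: dict) -> int:
        """Return an HTTP status code for the broker.

        Assumption: a 2xx response acknowledges the message and any other
        status asks the broker to redeliver it later.
        """
        try:
            message = envelope["message"]
            message_id = str(message["messageId"])
            payload = json.loads(base64.b64decode(message["data"]))
            user_id = payload["user_id"]
        except (KeyError, TypeError, ValueError):
            # Malformed delivery: not acknowledged. In practice this should be
            # paired with a retry limit or dead-letter policy on the broker.
            return 400

        if message_id in self.seen_ids:
            return 204  # duplicate delivery: acknowledge without redoing work

        try:
            send_welcome_email(user_id, self.sent_log)
        except Exception:
            return 500  # not acknowledged, so the broker retries later

        self.seen_ids.add(message_id)
        return 204


def make_envelope(message_id: str, payload: dict) -> dict:
    """Build a test envelope in the assumed push format."""
    data = base64.b64encode(json.dumps(payload).encode("utf-8")).decode("ascii")
    return {"message": {"messageId": message_id, "data": data}}


handler = PushHandler()
envelope = make_envelope("m-1", {"user_id": "u-42"})
print(handler.handle(envelope))  # first delivery is processed
print(handler.handle(envelope))  # redelivery is acknowledged but skipped
print(handler.sent_log)
```

Because an unacknowledged message comes back, the handler is written to be idempotent: a redelivered message is acknowledged without running the core logic twice.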

Why Most Microservices Are Unnecessary

Across multiple projects, I've seen the same pattern: most microservices don't earn their keep — usually because they were created without an organizational reason to exist. They were a copy of someone else's architecture, not a reflection of the team that owns them.

An in-process function call is orders of magnitude faster than an HTTP call, and it avoids network latency, retries, and the long tail of distributed-system failures. Building those failure modes into the system before they're justified is the microservices premium I'm not willing to pay early.
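As a rough illustration of that gap, the sketch below times a trivial in-process call and compares it with an assumed network round trip. The 0.5 ms figure is an assumption for a call between two services in the same data center, not a measurement, and `apply_discount` is a hypothetical stand-in for a piece of business logic.

```python
import timeit


def apply_discount(price_cents: int) -> int:
    """Hypothetical stand-in for business logic called in-process."""
    return price_cents * 90 // 100


# Average cost of one in-process call on this machine.
CALLS = 200_000
in_process_s = timeit.timeit(lambda: apply_discount(1000), number=CALLS) / CALLS

# ASSUMPTION: 0.5 ms per HTTP round trip between two services in the same
# data center. Real values vary widely; measure your own network.
ASSUMED_HTTP_ROUND_TRIP_S = 0.0005

ratio = ASSUMED_HTTP_ROUND_TRIP_S / in_process_s
print(f"in-process call: {in_process_s * 1e9:.0f} ns")
print(f"assumed HTTP round trip is roughly {ratio:,.0f}x slower")
```

The exact ratio depends on the machine and the network, and it ignores serialization, retries, and tail latency, all of which add to the cost of the remote call.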

In my experience, code is rarely the bottleneck — the database is. Most API work is data manipulation, waiting on a query, or waiting on a third-party service. Time I'd spend scaling microservices is usually better spent scaling the database.

When a Microservice Actually Earns Its Keep

Two things justify carving out a service:

Resource isolation. Some workloads will starve the rest of the system of CPU or memory if they share a process:

- Email composition, HTML rendering, PDF generation

- Image and video processing

- AI model inference

Team boundaries. Once a domain is owned by a separate team with its own release cadence, decisions, and on-call rotation, Conway's Law catches up. Forcing two teams to coordinate inside one codebase creates more friction than running two codebases. That's the moment to split — not before.

Scaling the Organization, Not Just the System

When I'm leading multiple teams, I still don't start with microservices. I split along domain boundaries first — Conway's Law applied deliberately. Once a team owns a domain, the architecture inside it is their decision; I only care about the contract they expose.
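One way to pin down such a contract inside a single codebase is a typed interface that other domains depend on, with the implementation kept private to the owning team. The sketch below uses Python's `typing.Protocol`; `UserDirectory`, `UserSummary`, `InProcessUserDirectory`, and `greeting_for` are hypothetical names for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass(frozen=True)
class UserSummary:
    """Data that crosses the domain boundary (hypothetical shape)."""
    user_id: str
    display_name: str


class UserDirectory(Protocol):
    """The contract the user domain exposes to everyone else."""

    def get_user(self, user_id: str) -> Optional[UserSummary]:
        ...


class InProcessUserDirectory:
    """The owning team's implementation; here it lives inside the monolith."""

    def __init__(self, names_by_id: dict) -> None:
        self._names_by_id = names_by_id

    def get_user(self, user_id: str) -> Optional[UserSummary]:
        name = self._names_by_id.get(user_id)
        return UserSummary(user_id, name) if name is not None else None


def greeting_for(directory: UserDirectory, user_id: str) -> str:
    """Code in another domain: depends on the contract, not the implementation."""
    user = directory.get_user(user_id)
    return f"Hello, {user.display_name}!" if user else "Hello, guest!"


directory = InProcessUserDirectory({"u-1": "Ada"})
print(greeting_for(directory, "u-1"))
print(greeting_for(directory, "u-404"))
```

If the user domain later moves into its own service, a client class with the same `get_user` method could replace `InProcessUserDirectory` without changing `greeting_for`.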

The monolith doesn't survive forever, and that's fine. It survives as long as the team structure justifies it. When the org grows, the system grows with it — but along the seams the team has already drawn, not arbitrary lines I imagined on day one.

My goal is delivering value with the least effort possible, with an architecture that matches the team I have — not the team I'm imagining I'll have someday.